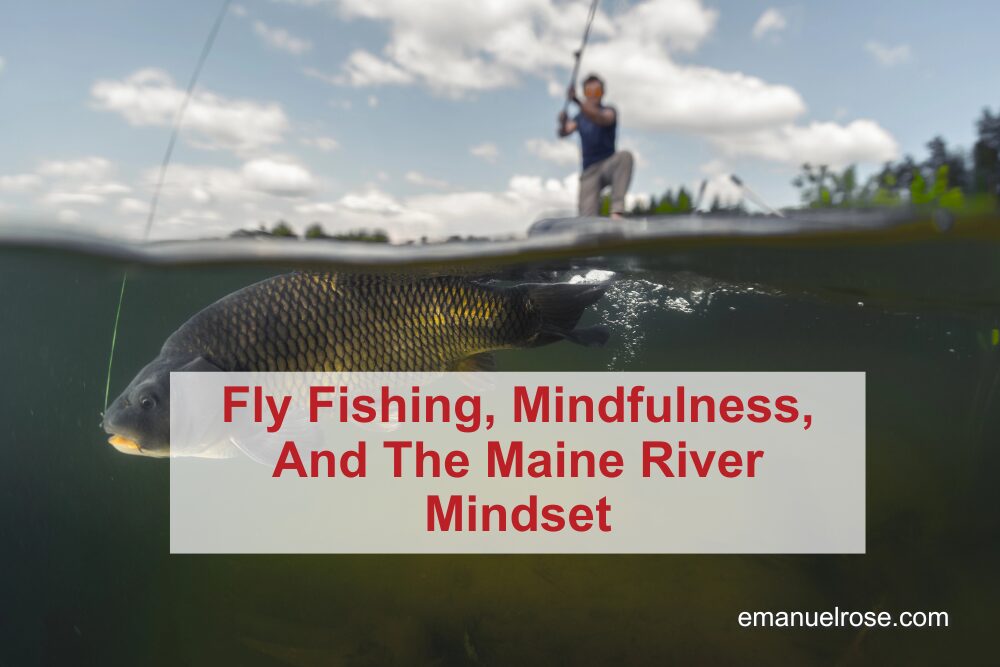

Fly Fishing, Mindfulness, And The Maine River Mindset

Time on wild water is less about the number of fish and more about deepening your relationship with place, self, and the people you share it with. Sawyer Deroche’s life on Maine rivers offers a simple directive: put in the time outside, and the clarity, connection, and growth will follow.

- Schedule regular “no-phone” river or park walks where the only goal is to notice water, trees, light, and wildlife.
- Shift outdoor goals from trophies and tallies to a single question: “What do I want to feel and learn out here today?”
- Use Sawyer’s rule of thumb: if you want memorable encounters (with wildlife or insight), you must consistently “put time on the water.”
- Include younger people on your trips so they form their own bond with wild places and become future stewards.
- When you’re outside, periodically pause, look around, and ask, “What here is depending on this river or forest?” to build a sense of interconnectedness.
- Plan at least one annual outing that stretches you (new water, a longer float, or a guided trip) to refresh your perspective.
- Let guides and experienced outdoors people design the day around immersion and beauty, not just catching or checking boxes.

The River Immersion Loop: Six Steps From Screen To Stream

Step 1: Answer the invitation to step away from screens and back into moving water, even if that just means a short walk along a local river. The act of physically leaving the noise of your routine is the first step toward a different state of mind.

Step 2: Arrive with intention, not agenda. Like Sawyer’s ideal clients, decide that your primary goal is to experience the place (its light, sounds, current, and wildlife) rather than to “rack up” outcomes.

Step 3: Stay long enough for the river to show you something. Big fish, eagles, or a sturgeon at your feet only appear to people who keep coming back; the same goes for personal insight and calm.

Step 4: Notice interconnectedness in real time. Watch migratory fish in the Kennebec, moose at a brook, or herring chased by eagles, and let that web of life remind you that you are part of, not separate from, the ecosystem.

Step 5: Share the experience with someone else, especially a younger person. Bringing a student, child, or grandchild on the water multiplies the impact and keeps the guiding tradition of stewardship alive.

Step 6: Return home with a small, concrete commitment: more time outside, less phone time, or one new place to explore. Then repeat the loop until a nature-centered lifestyle becomes your default rather than an occasional escape.

Guided Rivers vs. Guided Life: A Wilderness Comparison

| Aspect | On A Maine-Guided Float | In Daily Life | Practical Takeaway |
| --- | --- | --- | --- |
| Goals | Experience beauty, learn about the river, and catch some fish along the way. | Hit metrics, check tasks, rush from one obligation to the next. | Redefine success as depth of experience, not just volume of output. |
| Navigation | Use maps, a compass, and local knowledge to move safely through wild water. | Rely on habits and notifications, often without clear direction or reflection. | Build your own “map and compass” through values, quiet time, and planning. |
| Time Investment | Accept that memorable encounters require repeated trips and long days. | Expect immediate results from short bursts of attention. | Apply the “time on the water” mindset to personal growth and leadership: show up consistently. |

Questions From The Current: Reflections For A Nature-Led Life

How does shifting focus from catching fish to experiencing the river change your state of mind?

When you stop measuring success by length and numbers, pressure drains away, and curiosity takes its place. You begin to notice wind on the water, bird movement, and your own breathing, which anchors you in the present instead of in expectations.

What can a seven-foot sturgeon at your feet teach you about perspective?

Sharing water with a massive, ancient fish reminds you that you’re a brief visitor in a much longer story. That realization can shrink daily worries down to size and invite more humility, gratitude, and awe into your decisions.

Why is “time on the water” the real secret, in fishing and in growth?

There is no magic fly that replaces accumulated hours of presence and practice. Whether you’re building a business, a family, or a skill, steady contact with the work, showing up again and again, is what creates breakthroughs.

How does watching wildlife in its own habitat affect your sense of connection?

Seeing moose, eagles, or shad simply living their lives erodes the illusion that humans sit above or outside nature. It can revive a sense of kinship and responsibility that pushes you to protect watersheds, forests, and the species that rely on them.

What happens when you bring a younger person onto the water with you?

You’re not just sharing a pastime; you’re opening a doorway to wonder, resilience, and stewardship. That shared experience can shape their identity and, over time, build communities that care enough to fight for wild places.

Author: Emanuel Rose, Senior Marketing Executive, Strategic eMarketing

Contact: https://www.linkedin.com/in/b2b-leadgeneration/

Last updated:

- John McPhee’s book “The Founding Fish,” which captures the spirit and story of American shad.
- Maine’s Registered Guide testing system, with its emphasis on law, safety, and map-and-compass navigation.
- Wilderness-tripping practices at camps like Kieve/Wavus help teenagers build confidence and resilience outdoors.
- Fly fishing traditions focused on brook trout, landlocked salmon, and migratory fish on the Kennebec River.
- Spiritual and transcendentalist reflections rooted in thinkers like Thoreau, applied to modern river time and mindfulness.

About Strategic eMarketing: Strategic eMarketing helps values-driven organizations translate authentic stories and nature-based leadership lessons into measurable marketing results, serving brands that want a deeper, more human connection with their audiences.

- https://strategicemarketing.com/about
- https://www.linkedin.com/company/strategic-emarketing
- https://podcasts.apple.com/us/podcast/nature-bound-with-emanuel-rose/id1741980361
- https://open.spotify.com/show/6v7x8XOUfUQDdAlloCoo0h
- https://www.youtube.com/channel/UC7Ax4n0g6_Y4SJRlC470wEg

Guest Spotlight

- Guest: Sawyer Deroche
- LinkedIn: Not currently active (per guest)
- Company: Central Maine Fly Fishing and Adventures, LLC (Registered Maine Guide services for fishing, canoeing, and recreation in and around Waterville, Maine)
- Email: centralmaineflyfishadventures@gmail.com
- Episode: Nature Bound with Emanuel Rose, a conversation focused on guiding traditions, Maine rivers, and building a fly-fishing hub
